How Small Bugs Become Big Problems

Most major failures don’t start with dramatic mistakes. They begin with something small: an off-by-one error, a confusing UI label, a missing null check, an undocumented assumption, a silent retry loop. These “little” bugs often look harmless in isolation, especially when they don’t immediately crash a system or trigger alarms. But in real environments—where systems interact, scale changes behavior, and humans adapt to quirks—small defects can compound into outsized damage.

Why small bugs are rarely isolated

A bug is rarely just a single line of code behaving incorrectly. It’s usually a mismatch between an assumption and reality. Maybe the developer assumed a value is always present, a time zone is consistent, a network call is reliable, or an input is validated upstream. As long as conditions match the assumption, the bug stays quiet. When conditions drift—as they always do—the bug surfaces in a context where its impact is larger and harder to diagnose.

In modern systems, even a “tiny” defect can influence:

- Data integrity (incorrect records that spread to downstream systems)

- Security (small validation gaps that become exploitable)

- Reliability (minor error handling issues that trigger retries and overload)

- User behavior (workarounds that become informal processes)

- Operational costs (support tickets, manual cleanup, incident response)

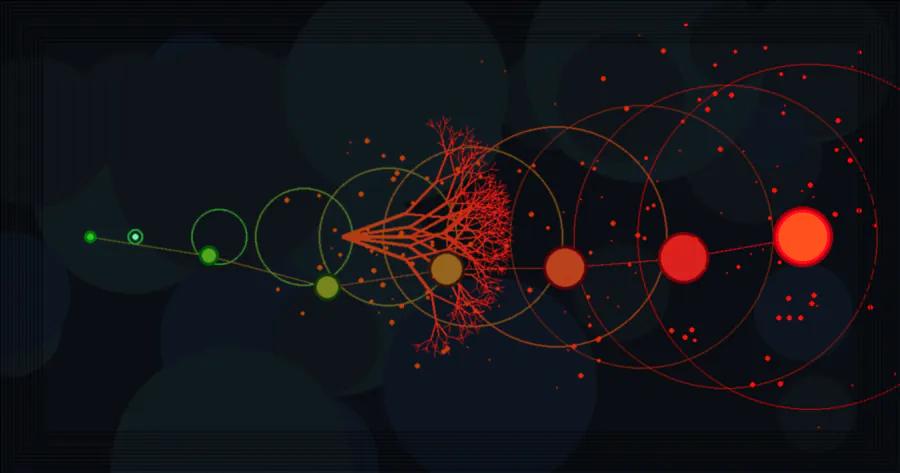

The compounding effect: scale, time, and coupling

Three forces amplify small bugs into big problems:

- Scale: A bug that affects 0.1% of requests feels negligible at 1,000 requests per day. At 100 million requests per day, it becomes a steady stream of failures, retries, and corrupted states. Scale turns rare events into routine events.

- Time: Small inaccuracies accumulate. A rounding error in billing might be pennies per transaction until it adds up to a meaningful discrepancy over months. A slowly growing memory leak may look fine in testing yet trigger outages after weeks of uptime.

- Coupling: Systems are interconnected. One service’s “minor” incorrect value becomes another service’s assumption. Data pipelines, caches, analytics, fraud detection, and customer support tools all depend on upstream correctness. Coupling lets a tiny defect travel far.
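
To put rough numbers on scale and time, here is a small arithmetic sketch. The 0.1% rate and the request volumes come from the text above; the half-cent rounding loss and the monthly transaction count are purely illustrative assumptions.

```python
from decimal import Decimal


def daily_failures(requests_per_day: int, failure_rate: Decimal) -> Decimal:
    """Expected number of failing requests per day at a given failure rate."""
    return requests_per_day * failure_rate


FAILURE_RATE = Decimal("0.001")  # 0.1%, the rate used in the text

small = daily_failures(1_000, FAILURE_RATE)        # 1 failure per day
large = daily_failures(100_000_000, FAILURE_RATE)  # 100,000 failures per day

# Time: a hypothetical half-cent rounding loss on each of 2 million
# transactions per month (both figures are assumptions for illustration).
loss_per_transaction = Decimal("0.005")
transactions_per_month = 2_000_000
loss_after_six_months = loss_per_transaction * transactions_per_month * 6  # 60,000
```

The same defect rate produces one failure a day at small scale and a hundred thousand a day at large scale, which is why "negligible" is a statement about volume, not about the bug.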

Common pathways from tiny bug to major incident

1) Silent failures and degraded correctness

The most dangerous bugs are the ones that fail quietly. A system that returns a default value instead of raising an error can keep running while producing wrong results. By the time the problem is noticed, it may have affected thousands of records, reports, or decisions.

Silent failures tend to produce big problems because they bypass alerting. If nothing breaks loudly, no one investigates early.
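
As a minimal sketch of the difference, assume a hypothetical price lookup keyed by SKU. The function names and the `MissingPriceError` class are invented for illustration.

```python
class MissingPriceError(KeyError):
    """Raised when a product has no price on record (hypothetical error type)."""


def price_silent(prices: dict, sku: str) -> float:
    # Quiet failure: an unknown SKU is priced at 0.0 and nothing alerts.
    return prices.get(sku, 0.0)


def price_loud(prices: dict, sku: str) -> float:
    # Loud failure: the caller must handle the missing price explicitly.
    try:
        return prices[sku]
    except KeyError:
        raise MissingPriceError(f"no price on record for SKU {sku!r}") from None


prices = {"A-100": 19.99}
free_by_accident = price_silent(prices, "A-999")  # 0.0, silently wrong
```

The silent version keeps the system running, but every downstream invoice, report, or total built on that 0.0 is now wrong without any signal that it happened.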

2) Retries that create storms

Retries are a reliability tool that can turn into a reliability threat. A small bug—like treating a permanent error as transient—can cause clients to retry aggressively. Under load, retries multiply traffic, increase latency, and push healthy systems into failure. What started as a minor edge case becomes a cascading outage.
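
The misclassification can be as small as one comparison. The sketch below assumes HTTP-style status codes; which statuses count as transient is a judgment call, and the set used here is only an illustrative assumption.

```python
# Statuses treated as transient here are an illustrative assumption.
TRANSIENT_STATUSES = frozenset({429, 502, 503, 504})


def should_retry_buggy(status: int) -> bool:
    # The small bug: every error, including a permanent 404, is retried.
    return status >= 400


def should_retry(status: int, attempt: int, max_attempts: int = 3) -> bool:
    # Retry only transient failures, and never beyond the attempt cap.
    return attempt < max_attempts and status in TRANSIENT_STATUSES
```

With the buggy version, a request that can never succeed is retried until the client gives up, so every permanent error costs several times its normal traffic.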

3) “Just one exception” that becomes a pattern

A code path labeled “should never happen” eventually happens. Real data is messy, integrations change, and unexpected inputs arrive. If that path logs a warning and continues, it may seem harmless until it becomes frequent. Teams then normalize the warning, stop trusting logs, and miss the moment it crosses from oddity to incident.

4) Workarounds becoming institutionalized

When users encounter small friction (an unclear message, an annoying validation rule, a missing feature), they create workarounds. Workarounds often become informal processes: spreadsheets, manual re-entry, special instructions, or “click this twice.” Over time, the workaround shapes behavior, and the underlying bug becomes embedded in operations. Fixing it later becomes risky because people now depend on the behavior, even if it’s wrong.

5) Data corruption that spreads downstream

Incorrect data behaves like contamination. It replicates into caches, search indexes, analytics warehouses, ML features, and audit logs. Even after the root bug is fixed, cleanup may require backfills, reprocessing, and carefully coordinated corrections. A small validation bug can therefore create a large, long-lived recovery project.

6) Security gaps hiding in edge cases

Many security incidents begin as “minor” inconsistencies: a missing authorization check on one endpoint, a flawed rate limit, a parsing ambiguity, an overlooked file upload rule. Attackers look for precisely these edges. What seems like a narrow case can become a wide breach if it provides a foothold.

Why small bugs persist

If small bugs can become so costly, why do they remain in systems?

- They’re hard to reproduce: intermittent timing issues, race conditions, and environment differences hide defects.

- They don’t hurt immediately: the damage may be delayed, distributed, or masked by compensating processes.

- They lose out in prioritization: teams often optimize for visible features and urgent incidents, leaving subtle correctness debt behind.

- They’re socially normalized: once people expect a glitch, it stops feeling like a bug and starts feeling like the system.

- They require cross-team coordination: a small defect that spans services, vendors, or departments is harder to fix than to tolerate.

Preventing small bugs from turning into big problems

Make failures loud, specific, and actionable

Prefer clear errors over silent defaults when correctness matters. When a fallback is necessary, emit structured logs and metrics so the system’s “degraded mode” is visible. The goal is not to crash everything—it’s to ensure you can see and measure the compromise.
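
One way a visible fallback might look is sketched below. The `exchange_rate` function, the event fields, and the in-memory `Counter` standing in for a real metrics client are all assumptions for illustration.

```python
import json
import logging
from collections import Counter

logger = logging.getLogger("degraded_mode")
fallback_counts = Counter()  # stand-in for a real metrics client


def exchange_rate(rates: dict, currency: str, fallback: float) -> float:
    """Return the known rate, or `fallback` while making the fallback visible."""
    if currency in rates:
        return rates[currency]
    # Degraded mode: count it and log it in a machine-readable form.
    fallback_counts["exchange_rate"] += 1
    logger.warning(json.dumps({
        "event": "fallback_used",
        "component": "exchange_rate",
        "currency": currency,
        "fallback": fallback,
    }))
    return fallback
```

The caller still gets a usable value, but the counter and the structured log line mean the compromise can be graphed and alerted on rather than discovered later.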

Instrument the edges, not just the happy path

Measure unusual states: validation failures, retries, timeouts, partial successes, and dead-letter queues. Small increases in these signals are often the earliest warning signs. A bug rarely jumps from zero to catastrophe; it climbs.
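
A simple way to notice the climb is to compare the latest rate of an edge-case signal against its recent baseline. The sketch below assumes one rate per day is already being collected; the seven-day window and the 2× factor are arbitrary illustrative defaults, not recommendations.

```python
from statistics import mean


def is_climbing(rates: list, window: int = 7, factor: float = 2.0) -> bool:
    """True when the latest rate exceeds `factor` times the trailing-window mean."""
    if len(rates) <= window:
        return False  # not enough history to judge
    baseline = mean(rates[-window - 1:-1])  # the `window` values before the latest
    if baseline == 0:
        return rates[-1] > 0  # any occurrence after a clean baseline is notable
    return rates[-1] > factor * baseline


retry_rates = [0.001] * 7 + [0.0025]  # hypothetical daily retry rates
climbing = is_climbing(retry_rates)
```

A check like this is deliberately crude. Its purpose is to turn "the warning count looks a bit higher lately" into something a dashboard or alert can act on.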

Use guardrails: timeouts, backoff, and circuit breakers

Retries should have exponential backoff, jitter, and hard caps. Timeouts should be explicit. Circuit breakers should prevent a struggling dependency from being hammered into total failure. These patterns stop a small bug from amplifying through traffic multiplication.
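
The following is a minimal sketch of these guardrails, not a production implementation. It uses a "full jitter" style of backoff and a consecutive-failure circuit breaker; all names, defaults, and thresholds are assumptions, and the breaker is not thread-safe.

```python
import random
import time


def backoff_delay(attempt: int, base: float = 0.1, cap: float = 5.0,
                  rng=random.random) -> float:
    """Random delay in [0, min(cap, base * 2**attempt)] seconds."""
    ceiling = min(cap, base * 2 ** attempt)
    return rng() * ceiling


def call_with_retries(operation, is_transient, max_attempts: int = 4,
                      sleep=time.sleep):
    """Run operation(); retry only transient errors, up to a hard attempt cap."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception as exc:
            out_of_attempts = attempt == max_attempts - 1
            if out_of_attempts or not is_transient(exc):
                raise
            sleep(backoff_delay(attempt))


class CircuitBreaker:
    """Opens after `threshold` consecutive failures; allows a trial call after `cooldown`."""

    def __init__(self, threshold: int = 5, cooldown: float = 30.0,
                 clock=time.monotonic):
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Once the cooldown has elapsed, let a trial call through.
        return self.clock() - self.opened_at >= self.cooldown

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.threshold:
            self.opened_at = self.clock()
```

A caller would check `allow()` before each request to the dependency and report the outcome with `record_success()` or `record_failure()`. The `sleep`, `rng`, and `clock` parameters exist so the behavior can be tested without real waiting.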

Treat data as a product with contracts

Define schemas, validate at boundaries, and version contracts when formats change. If downstream systems rely on a field, treat changes as breaking unless proven otherwise. “It still parses” is not the same as “it remains correct.”
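
Boundary validation can be sketched with nothing more than the standard library. The `OrderEvent` shape, its field names, and the supported version numbers below are hypothetical; a real system might use a schema library instead.

```python
from dataclasses import dataclass

SUPPORTED_SCHEMA_VERSIONS = (1, 2)  # hypothetical contract versions


@dataclass(frozen=True)
class OrderEvent:
    order_id: str
    amount_cents: int
    currency: str


def parse_order_event(payload: dict) -> OrderEvent:
    """Validate an incoming payload at the boundary; reject anything off-contract."""
    version = payload.get("schema_version")
    if version not in SUPPORTED_SCHEMA_VERSIONS:
        raise ValueError(f"unsupported schema_version: {version!r}")
    order_id = payload.get("order_id")
    amount = payload.get("amount_cents")
    currency = payload.get("currency")
    if not isinstance(order_id, str) or not order_id:
        raise ValueError("order_id must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, int) or amount < 0:
        raise ValueError("amount_cents must be a non-negative integer")
    if not isinstance(currency, str) or len(currency) != 3:
        raise ValueError("currency must be a 3-letter code")
    return OrderEvent(order_id, amount, currency.upper())
```

A payload that sends `"amount_cents": "1250"` as a string would still parse as JSON, but it is rejected here instead of flowing downstream as a value of the wrong type.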

Reduce coupling and isolate blast radius

Decouple with queues, idempotent operations, and clear ownership of responsibilities. Limit the impact of a bug by ensuring failures are contained within a component, not propagated across the entire ecosystem.
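
Idempotency is the piece that makes retries and queue redelivery safe. The sketch below assumes a hypothetical `PaymentProcessor` that remembers results by an idempotency key; it is in-memory and not thread-safe, so it illustrates the idea rather than a deployable design.

```python
class PaymentProcessor:
    """Hypothetical processor that applies each idempotency key at most once."""

    def __init__(self):
        self._results = {}   # idempotency key -> stored result
        self.charges_made = 0

    def charge(self, idempotency_key: str, amount_cents: int) -> dict:
        # A repeated key returns the original result instead of charging again.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.charges_made += 1
        result = {"charge_id": self.charges_made, "amount_cents": amount_cents}
        self._results[idempotency_key] = result
        return result


processor = PaymentProcessor()
first = processor.charge("order-42", 1250)
again = processor.charge("order-42", 1250)  # e.g. a retried or redelivered message
```

Because the duplicate call returns the stored result, a retry bug upstream causes extra requests but not extra charges, which keeps the blast radius inside one component.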

Fix the small stuff strategically

Not every small bug deserves immediate attention, but the ones that touch money, security, safety, or core data integrity should be treated as high leverage. A useful question is: If this bug’s frequency increased 100×, what would happen? If the answer is “incident,” it’s worth addressing early.

Build feedback loops that shorten discovery

Automated tests help, but so do canary releases, feature flags, and quick rollbacks. When the cost of change is low, teams can fix small bugs before they become institutionalized. When releasing is risky, bugs linger and accumulate.
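
A percentage-based feature flag is one inexpensive way to limit exposure during a release. The sketch below assumes users are identified by a string ID and uses a stable hash so that each user stays in the same bucket; the function and flag names are hypothetical.

```python
import hashlib


def in_rollout(flag: str, user_id: str, percent: int) -> bool:
    """Deterministically place user_id in one of 100 buckets for this flag."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return bucket < percent


# Users enabled at 10% remain enabled when the rollout widens to 50%.
enabled_at_10 = [u for u in map(str, range(1000)) if in_rollout("new-checkout", u, 10)]
still_enabled = all(in_rollout("new-checkout", u, 50) for u in enabled_at_10)
```

Setting `percent` back to 0 acts as a quick rollback that needs no redeploy, which is what keeps the cost of fixing a small bug low.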

Small bugs are signals

Small bugs often point to deeper issues: unclear requirements, ambiguous data ownership, missing observability, or brittle integrations. Treating them as mere annoyances misses their value as early warnings. A system that routinely produces minor surprises is a system that will eventually produce a major one.

The best teams don’t aim for a world without bugs; they aim for a world where bugs are detected quickly, failures are contained, and correctness is defended at the boundaries. That’s how small problems stay small.