In real-world software, things go wrong: networks flap, third-party services degrade, data arrives malformed, and user behavior surprises you. In that reality, logs are not “nice to have.” They are the primary record of what a system did, why it did it, and what happened next. Good logging turns software from a black box into an observable system that teams can operate, secure, and improve.

Logs are your system’s memory

When an incident happens, you rarely have the luxury of reproducing it in a debugger. The environment is different, the data is different, and the failure may be intermittent. Logs provide a time-stamped narrative of events that lets you reconstruct what happened after the fact.

In practice, logs help answer questions like:

- Which request triggered the error, and what inputs did it carry?

- What code path was taken, and which downstream dependencies were called?

- How long did each step take, and where did latency spike?

- Did the system retry, circuit-break, or fall back? What was the outcome?
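To let logs answer the timing and outcome questions above, each significant step can record how long it took and how it ended. The following is a minimal sketch using Python's standard `logging` module. The logger name `checkout`, the step name, and field names such as `latencyMs` and `outcome` are illustrative assumptions, not a standard:

```python
import logging
import time
from contextlib import contextmanager

logger = logging.getLogger("checkout")  # hypothetical service logger


@contextmanager
def log_step(step, **context):
    """Log a step's duration and outcome; re-raise any exception it throws.

    `context` keys must not clash with built-in LogRecord attributes
    such as `name` or `message`, or logging raises a KeyError.
    """
    start = time.perf_counter()
    try:
        yield
    except Exception as exc:
        elapsed_ms = round((time.perf_counter() - start) * 1000, 1)
        logger.error(
            "step failed",
            extra={"step": step, "latencyMs": elapsed_ms, "outcome": "error",
                   "errorType": type(exc).__name__, **context},
        )
        raise
    elapsed_ms = round((time.perf_counter() - start) * 1000, 1)
    logger.info(
        "step completed",
        extra={"step": step, "latencyMs": elapsed_ms, "outcome": "ok", **context},
    )


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    with log_step("reserve_inventory", requestId="req-123"):
        time.sleep(0.05)  # stand-in for real work
```

The default `basicConfig` format prints only the message. The extra fields become visible once a structured formatter, such as a JSON formatter, is attached to the handler.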

Debugging without logs is guessing

Many teams learn the hard way that exceptions alone are not enough. A stack trace might show where code failed, but not the business context around it: user identifiers, feature flags, tenant, request IDs, payload sizes, dependency response codes, or the sequence of events leading up to failure.

High-quality logs reduce mean time to detect (MTTD) and mean time to resolve (MTTR) by making failures diagnosable. Instead of “it crashed,” you can see “payment capture failed due to a 502 from the gateway after three retries; the request used token type X and came from region Y.”

Logs connect the dots across distributed systems

Modern applications are often composed of multiple services, queues, and third-party APIs. One user action might trigger a chain of events across several systems. Without consistent logging, you end up with isolated fragments rather than an end-to-end story.

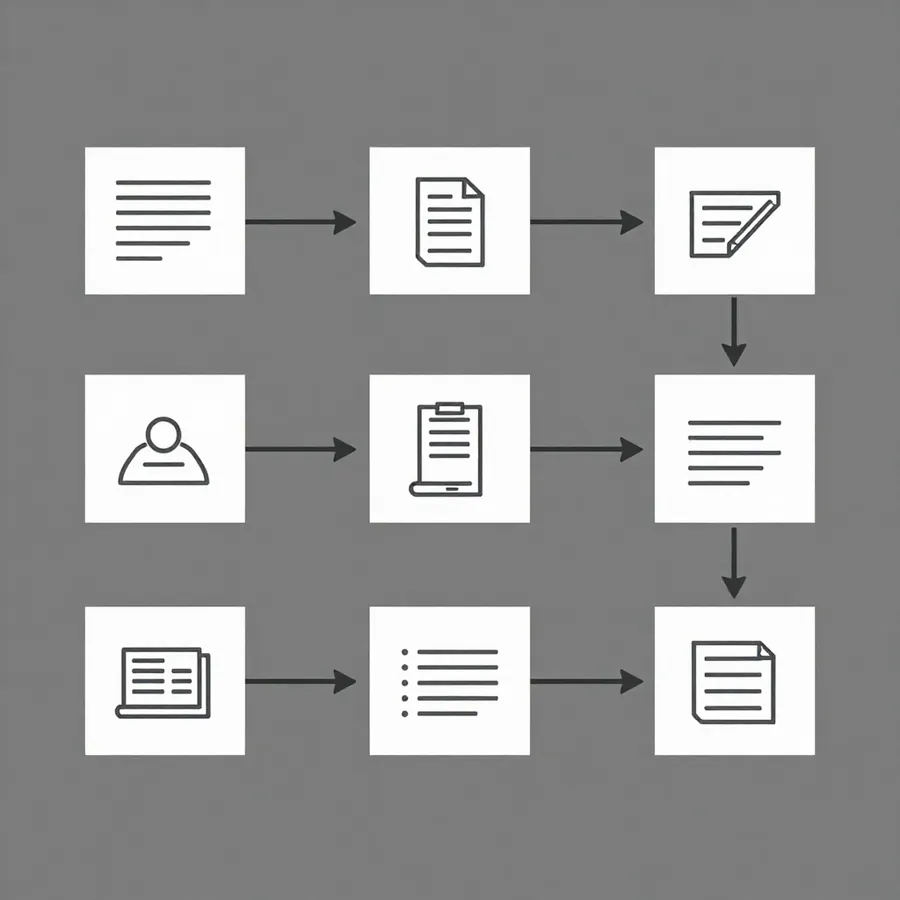

Two practices make logs especially valuable in distributed environments:

- Correlation IDs: Generate or propagate a request/trace ID through all services so you can retrieve all related logs with one query.

- Structured logs: Emit logs as key-value data (for example, JSON) so fields like service, environment, tenant, requestId, errorCode, and latencyMs can be filtered and aggregated reliably.
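Both practices can be sketched with the Python standard library. A `contextvars` variable carries the correlation ID for the current request, and a custom `logging.Formatter` emits each record as one JSON object. The `X-Request-ID` header name, the field names, and the plain-dict `headers` argument are assumptions for illustration. Real web frameworks expose case-insensitive header objects and often ship their own request-ID middleware.

```python
import contextvars
import json
import logging
import uuid
from datetime import datetime, timezone

# Correlation ID for the request being handled in the current context.
request_id_var = contextvars.ContextVar("request_id", default=None)

REQUEST_ID_HEADER = "X-Request-ID"  # assumption: header name varies by stack


def bind_request_id(headers):
    """Propagate the caller's request ID if present; otherwise generate one."""
    request_id = headers.get(REQUEST_ID_HEADER) or str(uuid.uuid4())
    request_id_var.set(request_id)
    return request_id


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object per line."""

    # Custom fields (passed via `extra=`) copied into the output when present.
    EXTRA_FIELDS = ("tenant", "errorCode", "latencyMs")

    def __init__(self, service, environment):
        super().__init__()
        self.service = service
        self.environment = environment

    def format(self, record):
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "service": self.service,
            "environment": self.environment,
            "requestId": request_id_var.get(),
            "message": record.getMessage(),
        }
        for key in self.EXTRA_FIELDS:
            if hasattr(record, key):
                entry[key] = getattr(record, key)
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        # default=str keeps non-JSON values (e.g. datetimes) from crashing logging.
        return json.dumps(entry, default=str)


if __name__ == "__main__":
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter(service="orders", environment="staging"))
    log = logging.getLogger("orders")
    log.addHandler(handler)
    log.setLevel(logging.INFO)

    bind_request_id({"X-Request-ID": "req-42"})  # e.g. from the incoming request
    log.info("order created", extra={"tenant": "acme", "latencyMs": 37})
```

Every line then carries `requestId`, so a single query on that field retrieves the whole request's story. Outgoing calls to other services would forward the same value in the same header.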

Logs help you operate, not just develop

In real projects, success is not just shipping features; it’s keeping the system healthy. Logs support operations by enabling:

- Alerting: Trigger alerts on error rates, unusual patterns, or repeated failures rather than waiting for users to complain.

- Capacity planning: Understand traffic patterns, peak times, and hotspots to plan scaling and infrastructure changes.

- Performance tuning: Identify slow queries, bottlenecks, and the specific endpoints or tenants causing load.

- Release validation: Compare baseline behavior before and after deployments to catch regressions quickly.
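In production, error-rate alerts usually live in the log or metrics platform, but the underlying calculation is simple. The following sketch works over JSON log lines and assumes that each line has an ISO 8601 `timestamp` with a UTC offset and a `level` field, as a JSON formatter might emit. The 5% threshold, five-minute window, and minimum event count are arbitrary placeholders:

```python
import json
from datetime import datetime, timedelta, timezone


def error_counts(lines, window, now):
    """Return (errors, total) for entries no older than now - window."""
    cutoff = now - window
    errors = total = 0
    for line in lines:
        entry = json.loads(line)
        if datetime.fromisoformat(entry["timestamp"]) < cutoff:
            continue
        total += 1
        if entry["level"] == "ERROR":
            errors += 1
    return errors, total


def should_alert(lines, threshold=0.05, window=timedelta(minutes=5),
                 min_events=20, now=None):
    """Alert when the recent error rate exceeds `threshold`."""
    now = now or datetime.now(timezone.utc)
    errors, total = error_counts(lines, window, now)
    # Too few events makes the rate meaningless (1 error out of 1 is 100%).
    if total < min_events:
        return False
    return errors / total > threshold
```

The `min_events` guard is one common way to avoid paging someone because a quiet service logged a single error.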

Logs are essential for security and compliance

Security incidents often look like normal behavior until you analyze patterns over time. Logs provide the audit trail needed to detect suspicious activity and to investigate incidents responsibly.

Common security and compliance uses include:

- Tracking authentication events (logins, failures, password resets, MFA challenges).

- Recording authorization decisions (access granted/denied, role changes).

- Auditing sensitive actions (exports, deletions, privilege escalation).

- Supporting forensic investigations with time-ordered evidence.
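One way to keep audit events consistent is to route them through a single helper and a dedicated logger, so they can be stored, retained, and access-controlled separately from operational logs. The action names, field names, and the `audit` logger name below are hypothetical. A real system would define them in its own audit schema:

```python
import json
import logging
from datetime import datetime, timezone

# Dedicated channel so audit events can be routed, retained, and
# access-controlled separately from operational logs.
audit_logger = logging.getLogger("audit")

# Hypothetical vocabulary; a real system would define its own.
AUDIT_ACTIONS = {
    "login", "login_failed", "password_reset", "mfa_challenge",  # authentication
    "access_granted", "access_denied", "role_change",            # authorization
    "export", "delete", "privilege_escalation",                  # sensitive actions
}


def audit(action, actor_id, outcome, target=None, **details):
    """Emit one structured, time-stamped audit event and return it."""
    if action not in AUDIT_ACTIONS:
        # Reject typos so audit queries don't silently miss events.
        raise ValueError(f"unknown audit action: {action!r}")
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "actorId": actor_id,
        "outcome": outcome,
        "target": target,
        "details": details,  # never pass secrets here
    }
    audit_logger.info(json.dumps(event, sort_keys=True, default=str))
    return event


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    audit("role_change", actor_id="u-17", outcome="granted",
          target="u-42", newRole="admin")
```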

At the same time, security requires discipline: never log secrets such as passwords, API keys, access tokens, or full payment card data. Treat logs as sensitive data stores.
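Redaction can be enforced centrally with a `logging.Filter` instead of relying on every call site. The following is a minimal sketch. The key list is an assumption to adapt to your own field names, and the card-number regex is a crude heuristic that will also mask other long digit runs, such as millisecond timestamps. Redaction is a safety net and does not replace keeping secrets away from the logger in the first place:

```python
import logging
import re

REDACTED = "[REDACTED]"

# Assumption: adapt these key names to your own field conventions.
SENSITIVE_KEYS = {"password", "api_key", "apikey", "access_token",
                  "token", "secret", "card_number"}

# Crude heuristic: 13-19 digits, optionally separated by single spaces or dashes.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def redact(value):
    """Recursively mask sensitive keys in dicts/lists and card-like numbers in strings."""
    if isinstance(value, dict):
        return {k: REDACTED if str(k).lower() in SENSITIVE_KEYS else redact(v)
                for k, v in value.items()}
    if isinstance(value, (list, tuple)):
        return [redact(v) for v in value]
    if isinstance(value, str):
        return CARD_PATTERN.sub(REDACTED, value)
    return value


class RedactingFilter(logging.Filter):
    """Scrub a record before any handler formats or ships it."""

    def filter(self, record):
        for key, value in list(vars(record).items()):
            if key.lower() in SENSITIVE_KEYS:
                setattr(record, key, REDACTED)       # e.g. extra={"password": ...}
            elif isinstance(value, dict):
                setattr(record, key, redact(value))  # nested payloads
        # Render the message once, scrub it, and clear args so formatters
        # don't re-interpolate the original values.
        record.msg = redact(record.getMessage())
        record.args = None
        return True


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    log = logging.getLogger("payments")
    log.addFilter(RedactingFilter())
    log.info("charging card %s", "4111 1111 1111 1111")  # message is masked
```

A filter added to a logger only sees records logged directly on that logger. Adding it to handlers instead also covers records that propagate up from child loggers.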

Logs improve product decisions

Not all logs are for incidents. Application logs and event logs can reveal how features are used, where users abandon flows, and which edge cases are most common. When thoughtfully combined with analytics, logs can help prioritize engineering work based on real user impact.

The key is to separate concerns: operational logs should remain focused on system behavior and troubleshooting, while product events should be clearly defined, consistent, and privacy-aware.
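A lightweight way to keep product events “clearly defined” is to give each event a typed schema and emit it on its own channel. The following sketch uses a frozen dataclass. The event name, its fields, the `product_events` logger, and the pseudonymous `user_ref` convention are illustrative assumptions:

```python
import json
import logging
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional

# Product analytics events get their own channel, separate from operational logs.
events_logger = logging.getLogger("product_events")


@dataclass(frozen=True)
class CheckoutStepViewed:
    """Hypothetical event: one class per event keeps names and fields consistent."""
    user_ref: str                     # pseudonymous ID, never an email or name
    step: str                         # e.g. "shipping", "payment"
    experiment: Optional[str] = None  # experiment variant, if any
    name: str = field(default="checkout_step_viewed", init=False)
    schema_version: int = field(default=1, init=False)


def emit(event):
    """Serialize an event dataclass and send it to the product-events channel."""
    payload = asdict(event)
    payload["timestamp"] = datetime.now(timezone.utc).isoformat()
    events_logger.info(json.dumps(payload, sort_keys=True))
    return payload


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    emit(CheckoutStepViewed(user_ref="u-9f2c", step="shipping"))
```

Because fields are fixed by the class, a typo in a field name fails at construction time instead of silently producing a new, inconsistent event shape.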

What “good logging” looks like

Teams often start by “adding more logs,” then discover they’ve created noise. Effective logging is less about volume and more about signal. Good logs are:

- Structured: Prefer machine-parsable fields over free-form text.

- Consistent: Use standard field names, severity levels, and formats across services.

- Context-rich: Include identifiers (request ID, user/tenant ID), key parameters, and outcomes.

- Appropriately leveled: Debug for diagnostic detail during development, info for significant normal events, warn for recoverable issues, and error for failures that require attention.

- Actionable: Each error should suggest what failed and where to look next (dependency name, status code, retry count, timeout value).

- Privacy-safe: Avoid sensitive data; sanitize inputs; apply retention policies.
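Severity and actionability work together in retry logic. A failure that will be retried is recoverable and gets a warning. A failure after the last attempt needs attention and gets an error. Both should name the dependency, status code, and attempt number. The following sketch assumes a hypothetical `DependencyError` raised by client code and uses exponential backoff. The field names are illustrative, not a standard:

```python
import logging
import time

logger = logging.getLogger("payments")  # hypothetical service logger


class DependencyError(Exception):
    """Hypothetical error raised by client code when a downstream call fails."""

    def __init__(self, dependency, status_code):
        super().__init__(f"{dependency} returned {status_code}")
        self.dependency = dependency
        self.status_code = status_code


def call_with_retries(call, dependency, max_attempts=3, backoff_s=0.2):
    """Call `call()`, retrying DependencyError with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except DependencyError as exc:
            fields = {"dependency": dependency, "statusCode": exc.status_code,
                      "attempt": attempt, "maxAttempts": max_attempts}
            if attempt < max_attempts:
                # Recoverable: we will try again, so WARNING rather than ERROR.
                logger.warning("dependency call failed; retrying", extra=fields)
                time.sleep(backoff_s * 2 ** (attempt - 1))
            else:
                # Retries exhausted: someone may need to act, so ERROR.
                logger.error("dependency call failed; giving up", extra=fields)
                raise
```

An alert rule can then key on the ERROR records alone, while the WARNING records still show how often the dependency needed retries.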

Common logging pitfalls in real projects

- Logging without correlation: You can’t trace a user request across services, so incidents become archaeology.

- Too much noise: Excessive info-level logs bury the important signals and increase storage costs.

- Missing context: Errors logged without IDs, parameters, or dependency details can’t be acted on.

- Inconsistent severity: Everything is “error,” causing alert fatigue, or nothing is, causing blind spots.

- Logging sensitive data: Creates security risk and compliance exposure.

- No retention or sampling strategy: Costs balloon, and teams either delete too aggressively or keep too much.
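Sampling reduces noise and cost without hiding problems if it applies only to low-severity records. The following is a minimal sketch of a `logging.Filter` that keeps every WARNING-and-above record and a configurable fraction of INFO and DEBUG records. The rate is a placeholder you would tune per environment:

```python
import logging
import random


class SamplingFilter(logging.Filter):
    """Keep every WARNING-and-above record; keep only a fraction of lower ones."""

    def __init__(self, rate, rng=None):
        super().__init__()
        if not 0.0 <= rate <= 1.0:
            raise ValueError("rate must be between 0 and 1")
        self.rate = rate
        self.rng = rng or random.Random()

    def filter(self, record):
        if record.levelno >= logging.WARNING:
            return True  # never sample away warnings or errors
        # random() is in [0.0, 1.0), so rate=1.0 keeps all and rate=0.0 keeps none.
        return self.rng.random() < self.rate
```

Attach it to a handler with `handler.addFilter(SamplingFilter(0.1))`. Sampling each record independently can leave a request's story half-told. Sampling by a hash of the request ID instead keeps or drops whole requests together.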

A practical approach to logging in production

If you want logs to pay off in real projects, treat them as a product with standards and ownership. A pragmatic baseline includes:

- Adopt structured logging across services and define a shared schema for core fields.

- Propagate correlation IDs from the edge (API gateway or frontend) through every service call.

- Define a severity policy so levels mean the same thing everywhere.

- Standardize error logging (error code, dependency, retry count, root cause when known).

- Protect privacy with redaction, allow-lists for logged fields, and secret scanning.

- Set retention and sampling based on environments and log types (debug vs. audit vs. operational).

- Make logs searchable with centralized aggregation and a queryable interface shared by dev and ops.
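The allow-list mentioned above can be enforced in code. Enumerating every sensitive field name (a deny-list) is fragile, so this filter drops any custom field that is not in the shared schema, and a new, unreviewed field fails closed. In this sketch, `ALLOWED_FIELDS` stands in for your team's agreed core schema:

```python
import logging

# Attributes every LogRecord has by default; anything else arrived via `extra=`.
_STANDARD_ATTRS = set(vars(logging.LogRecord("", 0, "", 0, "", None, None)))
_STANDARD_ATTRS |= {"message", "asctime"}  # set later by formatters

# Assumption: your team's agreed schema of custom fields for operational logs.
ALLOWED_FIELDS = {"service", "environment", "tenant", "requestId",
                  "errorCode", "latencyMs", "dependency", "retryCount"}


class AllowListFilter(logging.Filter):
    """Remove any custom field that is not part of the shared schema."""

    def filter(self, record):
        for key in list(vars(record)):
            if key not in _STANDARD_ATTRS and key not in ALLOWED_FIELDS:
                delattr(record, key)
        return True
```

Attach it with `handler.addFilter(AllowListFilter())` on the handlers that ship logs to central aggregation. Adding a field then becomes a deliberate schema change rather than a side effect of one call site.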